AI Backlash Is Not a Reason to Abandon AI. It Is a Reason to Abandon Unaccountable AI Adoption.

A couple of months ago, I was hanging out with a friend who subscribed to Time magazine. While waiting to pick up something from his store, I saw this amazing cover sitting on one of the tables. I asked him if I could have that magazine. I'm so grateful that he said yes.

Last night, with about 15 minutes to spare outside a boardroom before leading a discussion on AI with the executives inside, I finally made the time to read it.

I was immediately inspired to write this post.

After reading it a few more times and digesting the feelings, intent, and perspectives laid out in the article, I concluded that TIME’s cover story “The People vs. AI” doesn’t read like a debate about technology. It reads like a referendum on how power moves when NOBODY feels they have a say.

Source: TIME cover story, Andrew R. Chow:

https://time.com/7377579/ai-data-centers-people-movement-cover/

If you consider yourself a leader, treat this as a signal worth taking seriously.

Not because the public sentiment is “anti-innovation,” and not because the ONLY alternative is to slow everything down. But because the current pattern of AI rollouts is teaching people that AI is something that happens to them, not with them.

And as one who advocates extensively for keeping the "human in the loop", I took it upon myself to offer an alternate perspective.

What I Think the Public AI Backlash Is Actually Voting Against

The article profiles a widening coalition:

- Local communities fighting data centers

- Faith leaders worried about meaning and dignity

- Creatives pushing back on commoditized art

- Organizers worried about surveillance and state power

- Nurses insisting that safety-critical work cannot be treated like an efficiency exercise

Those viewpoints vary extensively.

The shared theme is not “machines are evil.”

The shared theme is loss of agency.

And we all know how people react when they don't feel in control, especially when fear and a lack of understanding are in the mix.

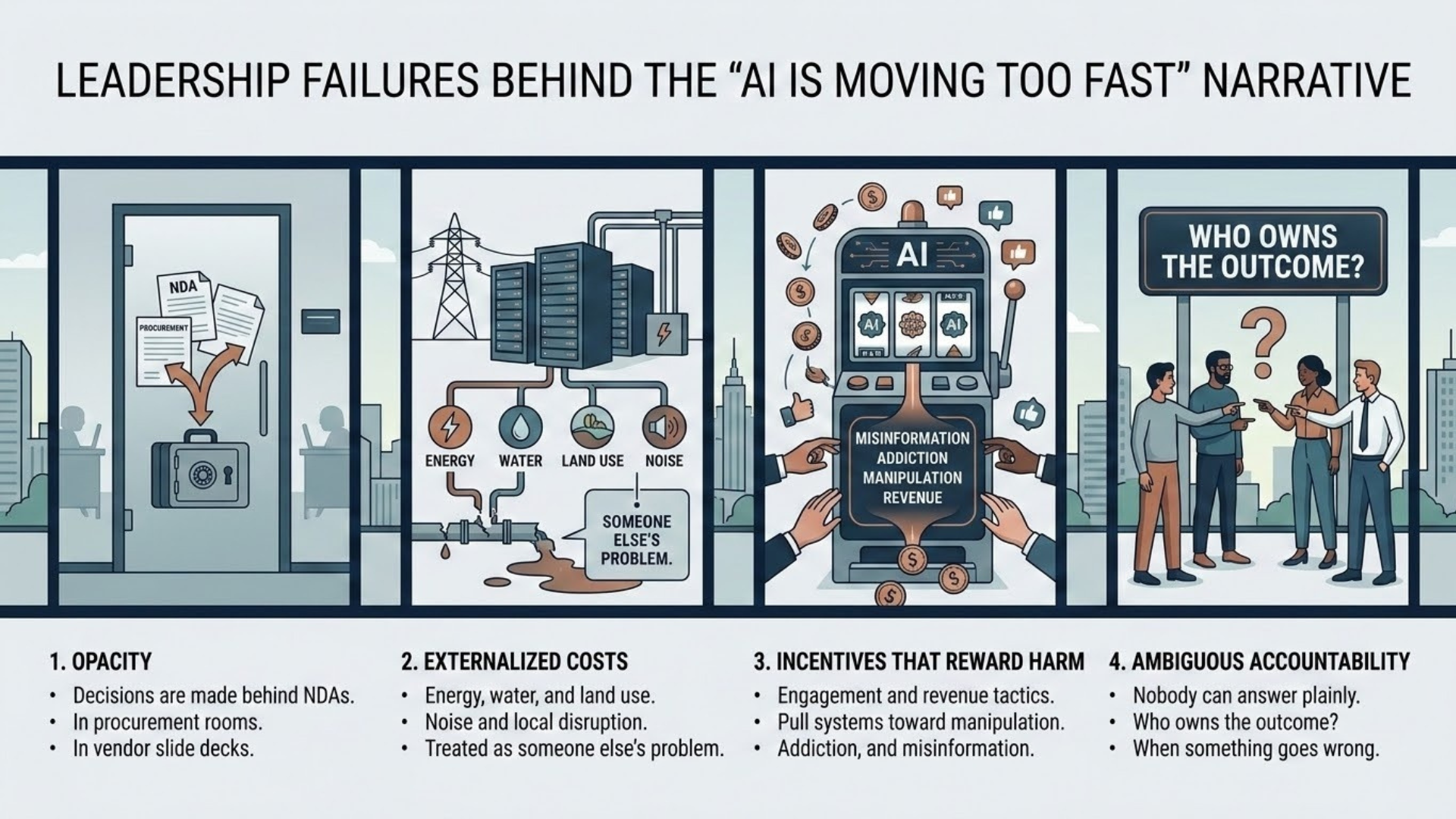

When people say “AI is moving too fast,” they are often pointing to 4 leadership failures:

- Opacity: decisions are made behind NDAs, in procurement rooms, in vendor slide decks.

- Externalized costs: energy, water, land use, noise, and local disruption are treated as someone else’s problem.

- Incentives that reward harm: engagement and revenue tactics pull systems toward manipulation, addiction, and misinformation.

- Ambiguous accountability: nobody can answer plainly who owns the outcome when something goes wrong.

If you want the benefits of AI without the social backlash, your job is not to “win the argument.”

My view is that it's your duty to correct those failures.

The AI Concern Gap Is Real, and Leaders Should Treat It as a Governance Signal

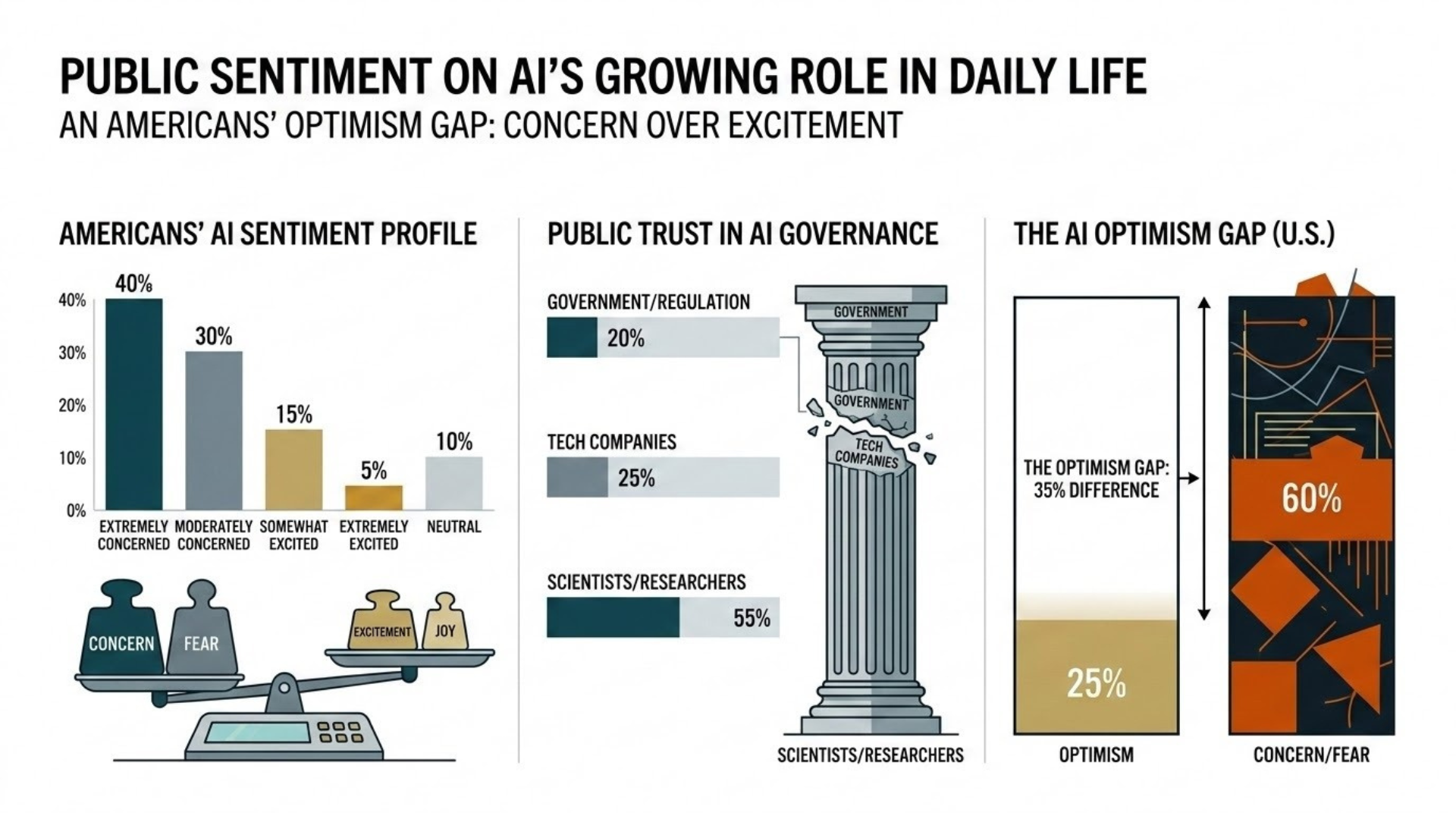

TIME cites a 2025 Pew finding: Americans are far more concerned than excited about AI’s growing role in daily life.

So, dear reader, I say treat that as a leadership input, not a PR obstacle.

A useful way to read it:

- People want more control

- They do not trust incentives

- They do not believe accountability will show up after a failure

Source: Pew Research Center summary of findings on concern vs. excitement (2025):

https://www.pewresearch.org/short-reads/2026/03/12/key-findings-about-how-americans-view-artificial-intelligence/

Start Where the Article Starts: The Physical Footprint of AI

I would say the most politically durable critique in the TIME piece is not philosophical.

It is local.

People see the buildout. They feel it in electricity bills, water concerns, land-use fights, and the noise of infrastructure that arrived fast and explained itself poorly.

That critique deserves a leadership response more mature than “the cloud needs power.”

To me, a serious response sounds like this:

- Treat data center siting as a consent problem, not a permitting problem. If the only strategy is to WIN THE VOTE, expect resistance.

- Price transparency matters. If households or municipalities bear costs while companies capture upside, the backlash is rational.

- Publish commitments that can be verified. Voluntary guardrails do not build trust when incentives still point toward extraction.

If you want a number that captures how fast the resistance is hardening, Data Center Watch estimated that in Q2 2025 alone, about $98B in projects were blocked or delayed amid local opposition.

Source: Data Center Watch Q2 2025 update:

https://www.datacenterwatch.org/q22025

Or even better, check out the mini essay we wrote in mid-2025 on the environmental impact of AI: https://www.vaseemtheaiguy.com/post/ai-environmental-impact-guilt

“I Don’t Trust the AI Industry” Is Not a Vibe. It Is Evidence.

The piece points to tactics that understandably corrode trust:

- Sexualized content

- Deepfakes

- Ads inside chat

- Monetization strategies that benefit from higher dependence

When critics say the industry is untrustworthy, the strongest rebuttal is not reassurance.

It is redesign.

So if you're smart about it, these three shifts can change the trust equation:

- Stop treating dependency as success.

Adoption that requires attachment is a business model, not a public good.

- Separate capability from distribution.

Many harms come from how AI is packaged, marketed, and embedded, not from the existence of the underlying capability.

- Make accountability legible.

People do not need perfect safety. They need to know who is responsible when harm occurs.

The 6 Perspectives Leaders Cannot Ignore

Perspective 1: Community Leaders Worried About Local AI Accountability

The Wisconsin candidate described in the article frames data centers as a democracy issue:

- Tax incentives

- NDAs

- Broken promises

- A long memory of public deals that did not deliver

I don't see that concern as anti-technology.

It is anti-extraction.

A leadership response that respects it has three parts:

- Public benefit must be specific — not “jobs,” but which jobs, for how long, and with what training pipeline

- Local governments need negotiating power

- Long-term stewardship matters — when a project fails, the community still owns the scar

If your AI strategy depends on secrecy and incentives that cannot be defended in daylight, your strategy is pretty fragile.

Perspective 2: Faith Leaders and Parents Worried About Teen Isolation

The pastor in the piece is not arguing that AI has no benefits.

The concern is relational: chatbots absorbing the emotional and moral conversations that used to happen with family, community, or faith leaders.

This concern has supporting data behind it.

Common Sense Media reports:

- Nearly three in four teens have tried AI companions

- Over half use them at least a few times a month

- A meaningful minority use them for serious conversations

Source: Common Sense Media, “Talk, Trust, and Trade-Offs” (July 2025):

https://www.commonsensemedia.org/research/talk-trust-and-trade-offs-how-and-why-teens-use-ai-companions

A mature response looks like guardrails that are enforceable, not aspirational:

- Age-appropriate design and verification where the product invites intimacy

- Limits on romantic or sexualized engagement patterns for minors

- Transparent escalation paths for self-harm and crisis signals

- Institutional norms that treat heavy AI companionship use as a risk indicator

This is NOT about banning AI.

It is about refusing to outsource your belonging and thinking. We have always argued that outsourcing them is NOT A GOOD IDEA.

Perspective 3: Organizers Linking AI to Surveillance and State Power

The Indigenous organizers in the article connect AI infrastructure to policing, detention, and state violence.

Even if you disagree with parts of the framing, the core claim is structurally true:

Like the hammer that came many moons before us, many AI capabilities are dual-use. You can use a hammer to build, or you can use a hammer to break. It's the same with AI.

A leadership response to this CANNOT be “that’s not what we intended.”

Intent is not governance.

As I have seen working with many regulatory bodies and board members, governance requires:

- Use-case boundaries, written and enforced

- Procurement transparency where public institutions are involved

- Auditability for high-impact systems

- Rights-preserving design that limits retention, sharing, and secondary use

Perspective 4: Tech Workers Warning About AI Monopoly and Dependence

The former Google researcher worries about dependence on a small number of monopoly providers.

This is not abstract.

In recent years, employees have publicly protested and organized around the downstream uses of cloud and AI contracts, including Project Nimbus.

Sources:

- TIME, “Google Workers Revolt Over $1.2 Billion Contract With Israel” (Project Nimbus):

https://time.com/6964364/exclusive-no-tech-for-apartheid-google-workers-protest-project-nimbus-1-2-billion-contract-with-israel/

- NPR coverage of Google firings tied to the protests:

https://www.npr.org/2024/04/19/1245757317/google-worker-fired-israel-project-nimbus-cloud-protestor

If you want AI to strengthen internal capability rather than create a new dependency, I think you can build three things:

- Model pluralism where it matters

- Human review norms that keep responsibility visible, front and center

- Internal AI fluency that teaches teams how to question outputs, not just generate them

For the last piece, Anthropic advocates for the concept of Discernment.

Source: Anthropic, “The 4 D's: Discernment” (2025): https://www-cdn.anthropic.com/d8ba4eda6eed65f193be549d49385006de8b7119.pdf

Perspective 5: Creatives Resisting “Volume” and “AI Slop”

The filmmaker in the story argues that AI can increase volume, but incentives push studios toward cheap output.

Even if you believe AI can contribute creatively, leaders still have to decide what they are optimizing for.

A leadership response is to draw clear lines:

- Consent for likeness and voice is non-negotiable

- Provenance and disclosure should be supported where feasible

- Creative work should not be reduced to “more output, faster”

If your adoption story is “we can replace craft with throughput,” you are not describing innovation.

You are describing a quality collapse.

A co-founder friend of mine, Dhruv, has written a lovely think piece on this very subject, titled "The Tragedy of Ophelia": https://docs.google.com/document/d/1wUQImiR5sZGExM7b3SIwB_extYdO8SQL/edit#bookmark=id.gbcuu9iyqz

He wrote this manifesto in Sao Paulo, Brazil, while incubating his AI app of the same name.

For the actual app, check out Ophelia: A New Age For Creatives: http://ophelias.ai

Fun Fact: All the images in this post were made with Ophelia!

Perspective 6: Nurses Insisting Safety-Critical Work Cannot Be Rushed

The nurse in the article makes the most operationally important point:

Frontline care involves intangibles and context sensing, and AI tools can create new risks if inserted without testing and human control.

This concern is echoed by organized nursing groups, and I see it more and more in medical practice, both in real life and in fictional shows like The Pitt.

In 2024, National Nurses United reported survey findings that automated handoffs and AI tooling can mismatch nurse assessments and degrade patient safety.

Source: National Nurses United press release (May 15, 2024):

https://www.nationalnursesunited.org/press/national-nurses-united-survey-finds-ai-technology-undermines-patient-safety

In healthcare — and any safety-critical environment — the correct posture is simple:

- AI DOES NOT REPLACE professional judgment. It supports it.

- No tool reaches patients without rigorous testing.

- Frontline workers have a say — not as a courtesy, but as a safety requirement.

If an AI system touches decisions that can harm people, your rollout is not a feature launch.

It is a governance event.

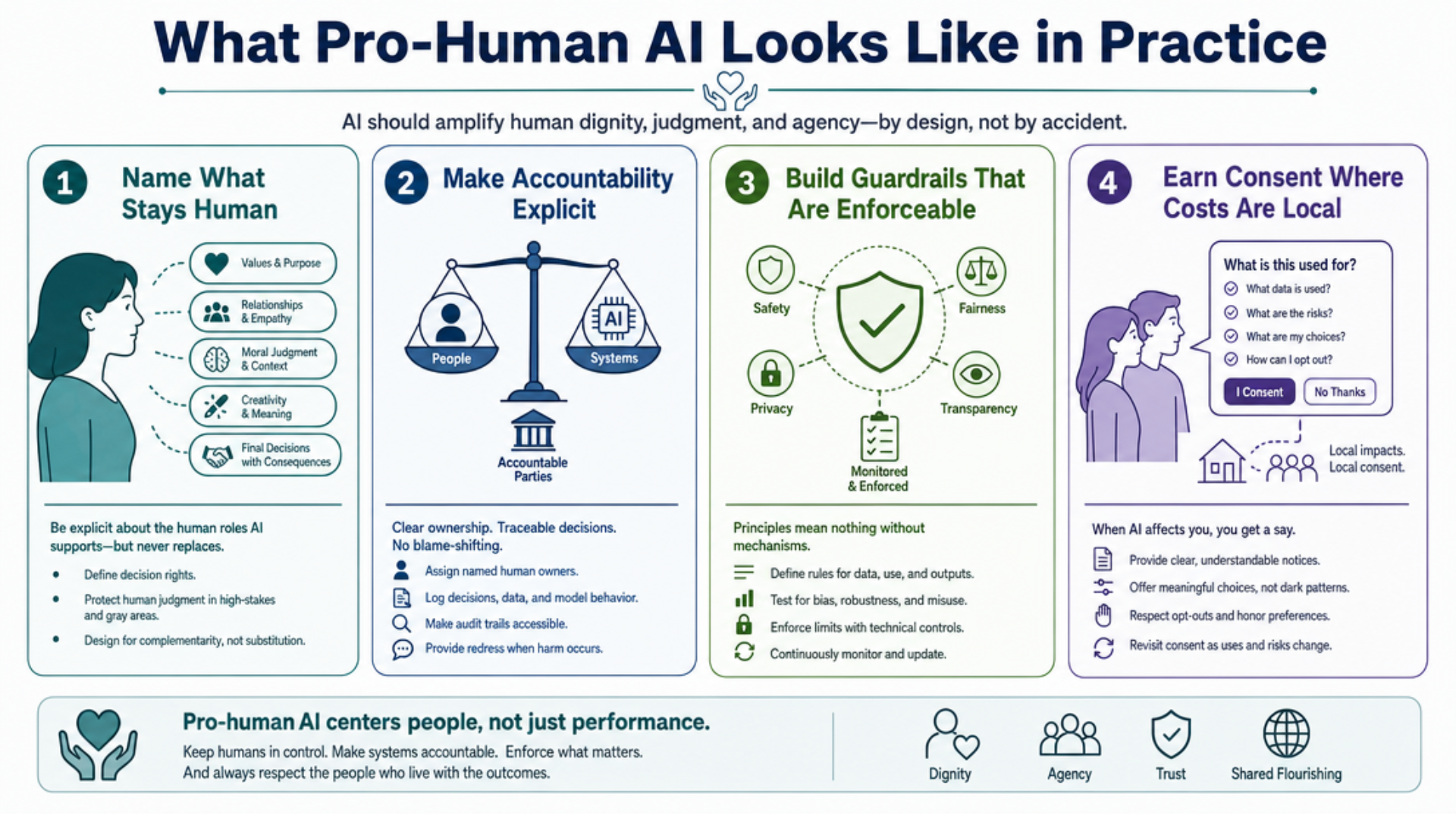

So, Vaseem, What Does Pro-Human AI Look Like in Practice?

My rebuttal to “The People vs. AI” was not meant to be a cheerleading essay.

It was intended to be a leadership blueprint.

So, here it is...

1. Name What Stays Human

Write it down. Publish it internally.

Some basic examples:

- Hiring decisions remain human

- Performance decisions remain human

- Safety decisions remain human

- Discipline decisions remain human

- Any decision with irreversible harm remains human

AI can inform.

It cannot own.

Keep the human in the loop.

2. Make Accountability Explicit

For every AI-enabled workflow, identify:

- The accountable owner

- The failure modes you will monitor

- The escalation path

- The stop rule

When people do not know who owns outcomes, they assume nobody does, and that becomes extremely problematic!
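For the technically minded, here is one way the four items above could be written down as a record that can be checked, rather than a slide. This is a minimal sketch, not a standard: the class name `WorkflowAccountability`, its fields, and the `gaps` helper are all my own illustrative assumptions, and the example workflow is made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowAccountability:
    """One record per AI-enabled workflow (hypothetical schema, not a standard)."""

    workflow: str
    accountable_owner: str = ""   # a named person or role inside the organization
    failure_modes: tuple = ()     # what you have committed to monitor
    escalation_path: tuple = ()   # who gets contacted, in order
    stop_rule: str = ""           # the condition that pauses the workflow

    def gaps(self) -> list:
        """Return the names of the items that are still unanswered."""
        answers = {
            "accountable_owner": self.accountable_owner.strip(),
            "failure_modes": self.failure_modes,
            "escalation_path": self.escalation_path,
            "stop_rule": self.stop_rule.strip(),
        }
        return [name for name, value in answers.items() if not value]


# Illustrative record: everything is filled in except the stop rule.
draft = WorkflowAccountability(
    workflow="support-ticket triage",
    accountable_owner="Head of Customer Support",
    failure_modes=("misrouted urgent tickets",),
    escalation_path=("on-call support lead", "Head of Customer Support"),
)
print(draft.gaps())  # ['stop_rule']
```

The point of the sketch is the `gaps` check: a workflow with any unanswered item is one where people will assume nobody owns the outcome.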

3. Build Guardrails That Are Enforceable

Not principles.

Controls.

That means:

- Approved AI use cases

- Data-handling boundaries

- Model access governance

- Audit mechanisms for high-impact outputs
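To make the difference between a principle and a control concrete, the four items above can be sketched as a single check that runs before any AI request goes through. Treat this as an illustration under stated assumptions: the policy table, the data classes, the role names, and the `authorize` function are all hypothetical, not taken from any real product or framework.

```python
from dataclasses import dataclass

# Hypothetical data classes, ordered from least to most sensitive.
SENSITIVITY = {"public": 0, "internal": 1, "confidential": 2}


@dataclass(frozen=True)
class UseCasePolicy:
    max_data_class: str        # data-handling boundary
    allowed_roles: frozenset   # model access governance
    high_impact: bool = False  # high-impact use cases get an audit entry


# Approved AI use cases (illustrative). Anything not listed is denied.
POLICIES = {
    "marketing_draft": UseCasePolicy("public", frozenset({"marketing"})),
    "contract_review": UseCasePolicy(
        "confidential", frozenset({"legal"}), high_impact=True
    ),
}

audit_log = []  # in practice this would be durable, append-only storage


def authorize(use_case: str, data_class: str, role: str):
    """Return (allowed, reason) and record high-impact approvals."""
    policy = POLICIES.get(use_case)
    if policy is None:
        return False, "use case not approved"
    level = SENSITIVITY.get(data_class)
    if level is None or level > SENSITIVITY[policy.max_data_class]:
        return False, "data class exceeds boundary"
    if role not in policy.allowed_roles:
        return False, "role lacks model access"
    if policy.high_impact:
        audit_log.append((use_case, data_class, role))
    return True, "allowed"
```

Under these assumptions, `authorize("resume_screening", "internal", "hr")` is refused because that use case was never approved, while `authorize("contract_review", "confidential", "legal")` is allowed and leaves an entry in `audit_log`. The design choice that matters is deny by default: a control says no unless someone has written down a yes.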

4. Earn Consent Where Costs Are Local

If your AI strategy has a physical footprint, you are in community politics, and in the deeply polarizing conversations that come with it, whether you like it or not.

Treat it with respect, own the duty, then remain kind and calm in your interactions.

5. Teach Judgment, Not Prompts

Most organizations rush to training that teaches how to use the tool.

The missing skill is judgment:

- How to question outputs

- How to detect uncertainty

- How to triangulate

- How to remain accountable

AI adoption that skips judgment is adoption that creates backlash inside the organization.

My Quiet Conclusion

The backlash described by TIME Magazine is not a reason to stop using AI altogether.

I think it actually further increases the demand to use it in a more meaningful and responsible way.

You see,

AI amplifies what's already inside you.

Curiosity. Creativity. Kindness.

It amplifies that too.

But if you carry fear, it amplifies that instead.

So my demand for leaders is to:

- Stop hiding behind inevitability

- Stop outsourcing responsibility to vendors

- Stop treating human consequences as someone else’s domain

If you do that, AI can be what it should have been from the start: A tool that expands human capacity while keeping human responsibility intact.

And if you want to intellectually discuss how to do that in a SAFE & BRAVE way...